Post by: Amit

Photo: Reuters

June 2025 — In an era where artificial intelligence has swiftly embedded itself into the core of industries worldwide, from manufacturing to cybersecurity, a growing chorus of experts is sounding the alarm over an equally urgent need: the establishment of robust security governance frameworks. Without clear and enforceable rules guiding the development, deployment, and oversight of AI systems, what is now viewed as a transformative asset could quickly spiral into a dangerous liability, threatening not just businesses but society at large.

The warnings come at a time when AI is no longer confined to tech companies or research labs. It has become the digital engine behind everything from predictive maintenance in factories to fraud detection in financial services and customer service automation in retail. Yet, according to recent surveys, while 66% of manufacturing organizations now actively depend on AI technologies for daily operations, an astonishing 95% admit they lack adequate governance structures to manage associated risks. This troubling imbalance has sparked fresh concerns over cyber vulnerabilities, operational inaccuracies, and the looming specter of regulatory non-compliance.

At the heart of this challenge lies the tension between the breakneck speed of AI innovation and the slower, more methodical pace of policy development. Companies, eager to capitalize on AI's ability to cut costs, optimize efficiency, and drive growth, often roll out new technologies without fully considering the hidden risks embedded within the systems. The result is a digital ecosystem where AI tools operate with limited oversight, sometimes making critical decisions that affect human lives, financial markets, and national security—all without clear accountability or safeguards in place.

One of the most pressing concerns is the amplification of existing cyber vulnerabilities through AI systems. As organizations integrate AI deeper into their operations, they inadvertently expand their attack surface. In manufacturing environments, for instance, the rise of AI-powered predictive maintenance and quality control has led to a 20% increase in undocumented external connections—shadow endpoints that create backdoors into sensitive factory networks. These weak spots are often invisible to traditional security teams, making them ripe targets for sophisticated cybercriminals or state-sponsored hackers.
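One way a security team might surface such shadow endpoints is to compare observed outbound connections against an inventory of documented destinations. The sketch below is illustrative only: the inventory, host names, and addresses are hypothetical (the addresses come from ranges reserved for documentation), and a real deployment would draw on actual network flow logs.

```python
from ipaddress import ip_address, ip_network

# Hypothetical inventory of documented external destinations (an assumption
# for illustration; a real list would come from the organization's records).
DOCUMENTED_NETWORKS = [ip_network("203.0.113.0/24")]


def find_undocumented(connections, documented=DOCUMENTED_NETWORKS):
    """Return (source, destination) pairs whose destination lies outside
    every documented network."""
    flagged = []
    for source, destination in connections:
        addr = ip_address(destination)
        if not any(addr in net for net in documented):
            flagged.append((source, destination))
    return flagged


# Hypothetical observed connections from factory systems.
observed = [
    ("plc-gateway", "203.0.113.10"),  # inside the documented range
    ("vision-qc", "198.51.100.7"),    # not in the inventory
]

print(find_undocumented(observed))
```

Anything the function returns is a candidate for review, not proof of compromise: the connection may simply be missing from the inventory.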

Further compounding the risk is the phenomenon of "shadow AI," a term that refers to employees or departments deploying AI tools without the knowledge or approval of their organization's IT or cybersecurity teams. This unsanctioned use creates blind spots in data governance, increasing the risk of sensitive information being mishandled, leaked, or inadvertently shared with third-party AI vendors whose data practices may not align with company or regulatory standards. Shadow AI not only undermines security but can also expose businesses to regulatory penalties, especially as data protection laws tighten across jurisdictions worldwide.

Against this backdrop, leading industry voices are calling for a dramatic shift in how organizations view AI governance—not as a bureaucratic hurdle but as a strategic imperative. Abhi Sharma, a global technology strategist, argues that governance can serve as a competitive advantage rather than a constraint. "Organizations that build their AI capabilities on a foundation of security, transparency, and accountability will not only avoid catastrophic risks but will also foster greater innovation," Sharma noted. "AI without governance is a liability. AI with governance becomes a trusted, value-generating asset."

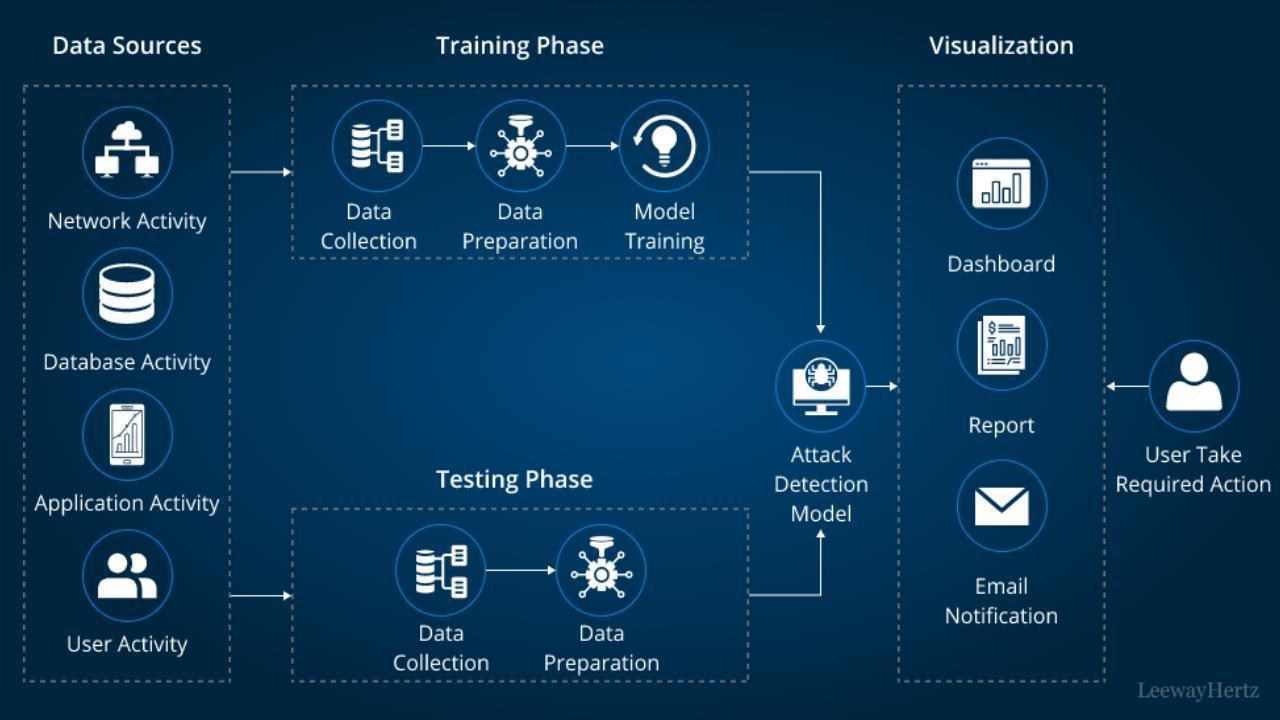

To achieve this balance between innovation and protection, experts are advocating for a proactive "trust but verify" approach to AI deployment. This model emphasizes centralized risk management, where AI systems are treated as critical assets subject to the same scrutiny as financial systems or safety protocols. It also calls for the adoption of zero-trust security principles, which assume no internal or external system is inherently safe and require continuous verification of every user, device, and process interacting with AI tools.

Another cornerstone of this model is the push for explainable and auditable AI outputs. As machine learning models grow more complex, their decision-making processes can become opaque even to their own developers—a phenomenon known as the "black box" problem. Ensuring that AI systems can produce transparent, understandable explanations for their decisions is essential for maintaining accountability and enabling human oversight, especially in sectors such as healthcare, finance, and law where lives and livelihoods are at stake.

Continuous monitoring and third-party auditing also play vital roles in safeguarding AI ecosystems. Rather than relying on one-time assessments or static policies, organizations must implement dynamic, real-time monitoring of AI behavior to detect anomalies, prevent data misuse, and ensure compliance with both internal guidelines and external regulations. Independent audits can further enhance trust by providing objective evaluations of how AI systems are performing and whether they align with ethical standards.
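As a rough illustration of what continuous monitoring can mean in practice, the sketch below flags a model metric (for example, a confidence score) that deviates sharply from its recent history. The class name, window size, and threshold are assumptions chosen for the example, not a standard; production monitoring would typically track many signals and route alerts to human reviewers.

```python
from collections import deque
from statistics import mean, stdev


class DriftMonitor:
    """Flag values that sit far from the rolling mean of recent observations.

    Hypothetical helper for illustration; window, threshold, and
    min_history are assumed defaults, not recommended settings.
    """

    def __init__(self, window=50, threshold=3.0, min_history=5):
        self.history = deque(maxlen=window)
        self.threshold = threshold
        self.min_history = min_history

    def observe(self, value):
        """Return True if value is anomalous relative to recent history."""
        is_anomaly = False
        if len(self.history) >= self.min_history:
            mu = mean(self.history)
            sigma = stdev(self.history)
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                is_anomaly = True
        # Keep anomalies out of the baseline so they do not mask later ones.
        if not is_anomaly:
            self.history.append(value)
        return is_anomaly


monitor = DriftMonitor()
scores = [0.90, 0.91, 0.89, 0.92, 0.90, 0.91, 0.35]
flags = [monitor.observe(s) for s in scores]
print(flags)
```

In this run only the final score, which falls far below the earlier readings, is flagged. A check this simple would complement, not replace, the independent audits described above.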

The importance of these governance measures is underscored by recent high-profile AI failures that have caused public backlash and financial damage. From biased hiring algorithms that discriminated against applicants to faulty AI in healthcare systems that misdiagnosed patients, the consequences of ungoverned AI have become increasingly visible. Such incidents have eroded public trust and spurred regulators to consider stricter oversight, including mandatory AI impact assessments and penalties for non-compliance.

In response, governments in the European Union, the United States, and Asia are racing to develop regulatory frameworks that will impose clearer boundaries on AI use. The EU’s Artificial Intelligence Act, for example, classifies AI systems by risk level and imposes strict obligations on high-risk applications. Meanwhile, in the U.S., federal agencies are exploring guidelines to ensure AI transparency and accountability in both the private and public sectors.

Yet regulation alone will not suffice. The private sector must take proactive responsibility for the ethical and secure development of AI technologies. This means investing not only in cutting-edge algorithms but also in governance structures, workforce training, and cross-industry collaboration. Leaders must view governance as a catalyst for long-term success, not a roadblock to short-term gains.

In the end, the AI revolution presents a double-edged sword: unparalleled potential for innovation on one side, and unprecedented risk on the other. Organizations that succeed will be those that recognize the inseparability of AI and governance and act decisively to embed both into their strategic DNA. The message from experts is clear: the future belongs to those who build not just faster or smarter AI, but AI that is trustworthy, secure, and human-centered.
